Memory Consistency Checks and ZKVM.

Memory consistency checks (MCC) are an essential part of any zkVM (zero-knowledge virtual machine). However, most of the time, they hide behind the scenes as an unseen but vastly important implementation detail. It would not be surprising if you've never even heard of them! In this article, I will explore what they are, why we need them, and the state-of-the-art techniques.

Academic research on memory consistency did not originate for web3 or even for more general zero-knowledge proof techniques. Initial researchers such as Blum et al. were actually interested in a setup where a verifier had limited memory resources but wanted to maintain larger memory by using an untrusted, computationally powerful third party. This makes sense, as the computational resources of a single machine in the mid-90s were fairly limited. Outsourcing some memory-intensive computations seemed like an ideal solution in an emerging, internet-connected world.

In 2024 and likely beyond, the decades-long work is being reused and repurposed for the very specific task of creating an efficient and trustworthy zkVM. If a program's execution trace is run in a circuit, we also need a way to verify that the memory addresses accessed with reads and writes were done so in a consistent manner. Otherwise, an adversarial prover could change values and create a fake proof. This is where MCC comes in. It prevents an adversarial prover from cheating during the zkVM process. But how is MCC done? A recent paper classifies MCC into two separate categories: first, the sorting algorithm category, and second, the 'address cycle method'. The naming 'address cycle method' might be better understood in this article as 'the non-sorting' methods. I imagine the authors would object, but I will return to this later.

Is sorting for MCC a straw-man?

Sorting algorithms for MCC serve as a common design component in production-grade systems like Cairo and Miden. The prover takes the full execution and memory trace, sorting them into the order of execution. This newly ordered list is then utilized to verify that memory was consistently managed throughout the entire runtime of the program. In systems like Cairo, ZK-circuits are employed to specifically check whether the address column (α) remains unchanged or increments only by 1 (ensuring continuity). Similarly, they verify that the value (γ) either stays the same or (α) increments by 1 (ensuring single-valuedness). David Wong provides a comprehensive blog post and video detailing these aspects, which I recommend checking out if you're interested.

Great! Why did we not all just use this paradigm? 'Sorting MCC' requires redundancy. The sorted trace is derived from the original trace. These need to remain consistent requiring further in-circuit costs. They also increase communication costs. Sorting systems are history dependent, meaning that we need to know either the full trace and/or each previous row depends on all previous rows behind it. This complicates things when stopping or starting a proof (like in IVC), and in parallelization. It seems that Sorting MCC is a straw-man just ready to get blown over by something better.

Permutation checks without re-ordering.

This idea originates from the 1990s paper cited above, offering a way to implement MCC without the added complexity of resorting. It also turns out to be an ideal candidate for history-independent and space-efficient ZKP techniques, such as the 'folding scheme.' The concept is straightforward: start with a table and initialize it with a set (value, # of read_count: initially zero) for each item. When the cell is read, the verifier requests a new write with the tuple (value, read_count + 1). If the prover attempts to cheat, the subsequent write request following the read ensures that the value is always updated to what was provided by the prover. In principle, the prover could manipulate the verifier to write incorrect entries for both the value and the read_count. However, when the full process is complete, one final read operation is performed, and this time, there is no subsequent write operation.

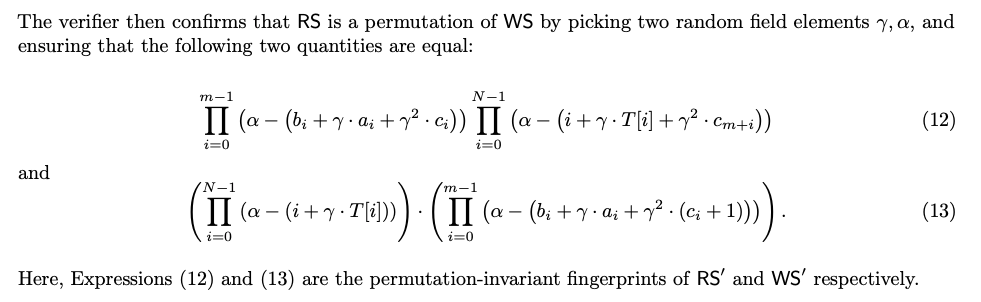

But wait, where is the permutation check? In practice their are two tables: R for read, and W for write. W is initialized with tuples (a (address as Field element), v (value as field element), c (counter as field element)). All counters start as zero as before. R gets new values added on each read operation: this is simply storing what is returned from W. Each read operation is immediately followed by a write operation with the tuple (a, v, c+1). After the smoke clears, one final read operation is done for each row without a corresponding write operation. If the prover was honest R and W should be permutations of each other, which means they should hold the same values.This will likely not immediately click, so please take a moment and think it though. Since W has values on init and R does not, the final read would ensure that R == W if no cheating occurred.

Great! Now in both methods for MCC we want to remove the complexities of tuples and make our operations on single field elements. The way we do this differs a bit in MCC methods. Cairo for example uses random linear combinations where the permutation check without re-ordering approach uses this with reed-solomon fingerprinting, which may be new to the reader. Fingerprinting techniques are well known but out of scope for this article: (please use Google-sensei), they allow you to take elements and return a single unique value with very low probability of a collision. The verifier decides on two random values α (alpha) and γ (gamma). Then rather than having a tuple for each row, a reed-solomon fingerprint is created like so: RS[i] => γ . a[i] + γ . v[i] + γ^2 . c[i]

Using this the final check is a grand-product argument for RS and WS that can be done in an incremental fashion (ideal for memory efficiency). Here is an example snipped from the JOLT paper:

It turns out that this can be reduced even further to one random element (γ) by using LogUp. The basic idea of which is that logarithms can turn multiplication into summations. With all of this said, we are now at what is often considered the state of the art for memory checking procedures.

So what's next?

Permem was introduced late last year. There is a video explaining it in detail against other schemes. It seems to be a non-sorting MCC with a permutation argument. The authors call this the 'address cycle method'.

We think that further reducing the required field elements needed for MCC in practice is a possible route. Particularly as the read_counts may be zero for many elements not accessed (sparse). Moreover, one may be able to group elements based on read_count and fingerprint depending on different assumptions of what is public data. Lastly, we are very excited about permutation checks without re-ordering in light of parallelized IVC techniques. Stay tuned!