What is Automated Reasoning?

The 400-Year Idea That Now Runs AWS Infra at Billion-Query Scale, With ICME's ZK(ARc) Will Power Secure Agentic Commerce At Billion-Transaction Scale.

Automated reasoning is the use of mathematical logic to let computers prove or disprove statements with absolute certainty. Not with 95% confidence. Not with a well-tuned model and crossed fingers. With proof. The kind of proof that runs at AWS handling 34 trillion API calls per day, the kind used in the world's only formally verified C compiler, and the kind that ICME is now combining with zero-knowledge cryptography to produce something that has never existed before: AI guardrails that are not just correct, but succinctly verifiable by any machine in under a second.

That last part is new. That's the breakthrough. We'll get there.

First, let's understand where this technology came from, because this is not a new idea. It is a 400-year-old idea that finally has computers powerful enough to run at scale, and cryptography sophisticated enough to make its proofs portable.

Definition & Origin

When artificial intelligence was formally named as a field at the 1956 Dartmouth Summer Research Project, the founding researchers did not agree on what AI actually was. The conference, organized by John McCarthy at Dartmouth College and funded by the Rockefeller Foundation, brought together a small group that would shape the next 70 years of computing. From the start, three distinct research philosophies emerged.

The first was connectionism: the idea that intelligence could be modeled by simulating networks of neurons. This is the lineage that eventually produced modern deep learning and LLMs. The second was search and heuristics: problem-solving through intelligent exploration of possibility spaces, pioneered by Newell and Simon. The third was symbolic logic: the belief that intelligence is fundamentally about manipulating formal symbols according to precise rules, and that a machine reasoning over logical representations could reproduce human thought. McCarthy himself belonged to this camp.

For decades, symbolic logic and neural networks competed for dominance. Neural networks won the last round decisively — GPT-4 is not a logic engine. But the symbolic camp never disappeared. It went deeper, became more rigorous, and produced the tools that now run at a billion queries a day at AWS. Automated reasoning is what symbolic AI looks like when you take it seriously for 70 years.

Automated reasoning is the branch of artificial intelligence and formal methods focused on using algorithms to reason logically about statements, programs, policies, and systems. Instead of statistical inference (like LLMs) or pattern matching, automated reasoning translates problems into formal logic and uses solvers to determine what is provably true or false.

The output of an automated reasoning system is one of three things:

- SAT: Satisfiable. The solver found a concrete example that makes the statement true and can show you exactly what it is. Not a suspicion, not a probability — a proof by example. If you ask "can an unauthorized user access this data?" and the answer is SAT, the solver hands you the precise combination of inputs that gets them in. That is 100% accurate. The vulnerability definitively exists.

- UNSAT: Unsatisfiable. It is mathematically impossible for this statement to be true under any input, in any scenario, ever. No edge case, no unexpected interaction, no combination of parameters makes it true. 100% accurate in the other direction.

- UNKNOWN: The solver timed out or the problem is undecidable. An honest non-answer rather than a wrong one.

When AWS's Automated Reasoning checks analyze an S3 bucket policy and return UNSAT on "can this bucket be accessed publicly?", they are not telling you the probability of a breach. They are telling you it is logically impossible. That is a categorically different claim than anything a machine learning model can produce.

The field has several branches — SAT and SMT solving check whether logical formulas can be satisfied, model checking exhaustively explores every possible state a system can reach, theorem proving constructs formal mathematical arguments, and abstract interpretation approximates program behavior with mathematical guarantees. The names differ but the property is the same across all of them: the results are provably correct, not probably correct. That single word is what separates automated reasoning from everything else in computer science, and it is why this technology now sits at the center of AI safety.

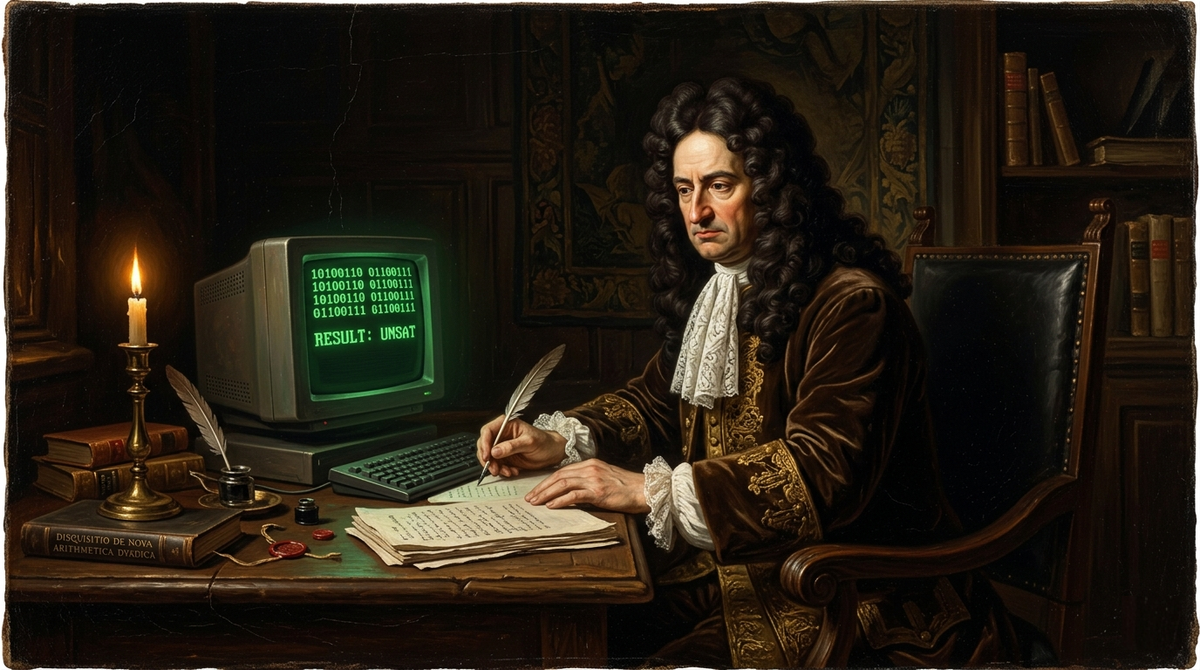

Leibniz Had the Idea. He Just Didn't Have a Computer.

The actual story starts before the Dartmouth Summer Research Project, long before anyone had coined the phrase "artificial intelligence." It starts in the 17th century with a German polymath who was already thinking about machines that could reason.

Gottfried Wilhelm Leibniz described a calculus ratiocinator in the 1670s: a universal formal language and mechanical calculus that could resolve any dispute through pure computation. His vision was that instead of arguing, people would say "let us calculate." He built a mechanical calculator called the Stepped Reckoner that could multiply and divide, the most advanced calculating machine of its era. But what Leibniz really wanted was something deeper, not a machine that crunched numbers, but one that could manipulate symbols according to logical rules and arrive at provably correct conclusions about anything. He understood that if you could formalize the structure of reasoning itself, you could mechanize it. He didn't have the mathematics to build it yet. The idea waited two hundred years.

It is worth pausing on how radical this vision was. In 1670, the dominant view was that reasoning was a uniquely human faculty, something close to divine. Leibniz looked at reasoning and saw an algorithm. That reframing is the intellectual origin of everything in this post.

Gottfried Leibniz also invented binary arithmetic, the representation of numbers as sequences of 0s and 1s — in 1679. He wrote about it in correspondence and eventually published it in 1703. He saw binary as philosophically significant, a connection between mathematical structure and metaphysical concepts of creation. He had no idea he was inventing the native language of every computer ever built. Every time a modern SAT solver manipulates Boolean variables, it is operating in the system Leibniz designed.

The next piece came from George Boole, a self-taught English mathematician who published The Laws of Thought in 1854. Boole's insight was that logical reasoning could be expressed as algebra. Logical statements became equations. AND became multiplication. OR became addition. NOT became subtraction from 1. Truth became 1, falsity became 0. For the first time, logic had the structure of arithmetic, you could calculate your way to a conclusion rather than argue your way there. Boole died at 49, never seeing what his algebra would become. His wife, Mary Everest Boole, later wrote extensively about mathematics education. Their daughter Alicia Boole Stott made significant contributions to four-dimensional geometry. The family had a gift for seeing structure where others saw mystery.

Gottlob Frege took Boole's algebra of logic and extended it into something far more powerful. His Begriffsschrift, published in 1879 and often translated as Concept Script, introduced the first formal system of predicate logic with quantifiers and variables. Before Frege, you could not write "for all x, if P(x) then Q(x)" in a rigorous formal language. After Frege, you could. Sentences about the world could be encoded precisely, unambiguously, in a notation that preserved their logical structure while stripping away the ambiguities of natural language. Frege wanted to provide rigorous logical foundations for arithmetic. Bertrand Russell discovered a fatal paradox in Frege's original system, the set of all sets that don't contain themselves; and Frege received Russell's letter pointing this out just as his second volume was going to press. He added a devastated appendix acknowledging the flaw. It was one of the more painful moments in the history of mathematics. But the core of Frege's predicate logic survived the paradox and became the language in which automated reasoning is still conducted today.

Alfred North Whitehead and Bertrand Russell then spent a decade attempting to rebuild mathematics on unassailable logical foundations, producing the three-volume Principia Mathematica between 1910 and 1913. They succeeded in deriving substantial portions of mathematics from pure logic, but at enormous cost. The proof that 1 + 1 = 2 appears on page 362 of the first volume. The notation was so laborious that few mathematicians actually read it. Still, it established something important: formal systems were powerful enough to express mathematics, and manipulating symbols within them was a well-defined mechanical process. A machine could, in principle, follow the rules.

Then came the hard truth, in two stages.

Kurt Gödel proved in 1931, at age 25, in his doctoral dissertation: that any formal system powerful enough to express basic arithmetic must contain true statements that cannot be proven within that system. This was the first incompleteness theorem. His second theorem proved that such a system cannot prove its own consistency. Together they demolished Hilbert's program, the dominant mathematical project of the early 20th century, which had aimed to establish a complete, consistent, decidable foundation for all of mathematics. Gödel's proof was so unexpected and technically subtle that some of the greatest mathematicians alive initially refused to believe it was correct. John von Neumann, who was in the audience when Gödel first presented the result, grasped its significance immediately and spent the next weeks working through the implications. He later said it was one of the most important mathematical results he had ever encountered.

Alan Turing and Alonzo Church independently proved in 1936 that the general Entscheidungsproblem, Hilbert's question of whether any mathematical statement could be mechanically decided, is undecidable. Turing did it by inventing the Turing machine, an abstract model of computation that defined what it means for a problem to be computable at all. Church did it through lambda calculus. Both proofs arrived at the same conclusion: there exist well-posed mathematical questions that no algorithm can answer in general.

This is the moment where most people would give up on the whole project of mechanizing reason. The field did the opposite. It took the undecidability results as a map rather than a stop sign. Yes, the fully general problem is undecidable. But the undecidability proofs are precise — they tell you exactly where the limits are. Everything inside those limits is fair game. And it turned out that the practical problems worth solving largely live inside those limits.

Cloud security policies, financial transaction rules, AI guardrail enforcement, authorization logic: all decidable. All provable. The universe of problems that automated reasoning can handle with certainty is vastly larger than the universe of problems it cannot. Gödel and Turing didn't close the door. They drew the blueprint.

1956 - The First Machine That Could Reason

The Logic Theorist, built by Allen Newell, Herbert Simon, and J.C. Shaw in 1956, proved 38 of the first 52 theorems in Principia Mathematica. Some of its proofs were more elegant than Russell's originals. It was demonstrated at the Dartmouth workshop that named "artificial intelligence." It proved that machines could do genuine mathematical reasoning.

What followed was decades of foundational work:

Martin Davis and Hilary Putnam built the first practical satisfiability algorithm (1960). DPLL (Davis-Putnam-Logemann-Loveland, 1962) added backtracking with unit propagation. It is still the foundation of modern SAT solvers, 60 years later.

J. Alan Robinson's resolution principle (1965) was the real breakthrough for theorem proving: a single complete inference rule for first-order logic, combined with practical unification. Robinson gave machines the ability to reason about general statements. This paper is the foundation of both automated theorem proving and logic programming.

Alain Colmerauer built Prolog at Aix-Marseille in 1972, making Robinson's resolution a practical programming paradigm. Logic became code.

The Interactive Proof Assistant Era

A parallel research line was building tools where humans and machines collaborate on proofs.

Robin Milner's LCF system at Edinburgh introduced the revolutionary idea that a theorem is an abstract data type whose only constructors are valid inference rules. If you can only build a value of type theorem by applying sound inference rules, then any value of that type is a valid proof. Soundness guaranteed by the type system. Milner also invented ML as the programming language to write proof tactics in. This LCF architecture became the foundation for essentially every proof assistant that followed.

Thierry Coquand and Gérard Huet developed Coq (now Rocq as of 2025) at INRIA starting in 1984. It won the ACM Software System Award in 2013. It is the tool in which CompCert, the world's only formally verified C compiler, was built. Xavier Leroy's CompCert is mathematically proven to never miscompile your code. When an independent study generated over 325 bugs in GCC and LLVM through random testing, CompCert had none.

Isabelle (Lawrence Paulson, Tobias Nipkow) followed. Lean 4 (Leonardo de Moura, Microsoft Research, 2021) has become the central ecosystem for AI-assisted theorem proving. Every major AI math breakthrough in the last two years uses it.

BDDs, Model Checking, and the Industrial Revolution

The late 1980s and 1990s brought formal methods to hardware verification at real scale.

Randal Bryant's 1986 paper on ordered Binary Decision Diagrams introduced a canonical, compact representation for Boolean functions. Bryant's paper holds the highest citation count in the Citeseer database. When you can represent a Boolean function canonically, you can check equality and satisfiability efficiently. Hardware verification changed immediately.

Edmund Clarke and E. Allen Emerson (1981) and independently Jean-Pierre Queille and Joseph Sifakis (1982) invented model checking: algorithmic verification of finite-state systems against temporal logic specifications, with automatic counterexample generation. Systematically explore every possible state of a system. Verify properties hold in all of them. Clarke, Emerson, and Sifakis received the ACM Turing Award in 2007.

When Burch, Clarke, McMillan, and Dill combined BDDs with model checking in 1990 in "Symbolic Model Checking: 10²⁰ States and Beyond," they enabled verification of systems with state spaces larger than the number of atoms in the universe. Hardware companies discovered that testing wasn't sufficient: state spaces were simply too large.

"Ten years ago, researchers into formal methods (and I was the most mistaken among them) predicted that the programming world would embrace with gratitude every assistance promised by formalisation to solve the problems of reliability that arise when programs get large and more safety-critical... It has turned out that the world just does not suffer significantly from the kind of problem that our research was originally intended to solve." - Tony Hoare 1995

The $475 Million Bug That Changed Everything

There is a particular kind of disaster that only becomes visible in retrospect. Not the catastrophic failure that announces itself with fire or collapse, but the quiet one, the subtly wrong answer that passes every test because no one thought to ask the right question. The Pentium FDIV bug was that kind of disaster, and it cost Intel what would be over a billion dollars in today's money.

In 1994, Intel shipped the Pentium processor with a flaw buried inside its floating-point division unit. The SRT division algorithm relied on a lookup table, and five entries in that table were missing. The result was that certain division operations returned answers that were almost correct. Not dramatically wrong, not the kind of wrong a sanity check catches. Subtly, quietly, convincingly wrong, in the fifth or sixth decimal place, in a narrow band of inputs that most users would never encounter.

Professor Thomas Nicely at Lynchburg College encountered them. He was doing number theory research and noticed inconsistencies he could not explain. He traced them to the chip. He posted about it online in November 1994, and within days the story had escaped into the mainstream press. What made the aftermath so damaging for Intel was not the bug itself but the disclosure. The company had independently discovered the flaw months earlier and decided not to tell anyone. When that became public knowledge, IBM halted Pentium sales. Engineers and researchers who had tolerated the bug as a minor inconvenience became angry about the concealment. Intel's initial position, that the flaw was minor and replacements would only be offered to users who could demonstrate a genuine need, lasted about three weeks before public pressure forced a full no-questions-asked recall. The final bill was 475 million dollars, a figure that translates to roughly 1.03 billion today.

The industry's response was not simply to test more carefully. The lesson was more fundamental than that. Testing, however thorough, samples a finite number of inputs from an infinite space of possibilities. The Pentium division unit had been tested extensively. The problem was that testing could not reach every possible input combination, and the missing lookup table entries lived in a corner of that space that the tests never visited. What the industry needed was a method that did not sample the space but reasoned about it completely. What it needed was formal verification.

Intel hired extensively from model checking and theorem proving communities. Engineers who had spent their careers in academic research found themselves with production mandates and serious resources. Edmund Clarke's former student demonstrated that Word Level Model Checking could have found the FDIV error before the chip was ever manufactured. The bug was detectable with tools that already existed in 1994. By the time Intel released the Nehalem microarchitecture in 2008, formal verification had become the primary validation method across the company, not a supplement to testing but a replacement for large classes of it. Intel published work describing how roughly 85 engineers were trained in formal methods through dedicated internal programs. The Pentium FDIV bug did not just cost Intel money. It reorganized an industry's relationship with proof.

The SMT Revolution That Made SAT Smarter

For a long time, the central tool of automated reasoning was the SAT solver, a program that takes a formula in propositional logic and determines whether any assignment of true and false values to its variables can make it satisfied. This is a deceptively simple description of a problem that turns out to be NP-complete, meaning that in the worst case its difficulty scales exponentially with the size of the formula. And yet, through a series of algorithmic innovations in the 1990s and early 2000s, SAT solvers became fast enough to handle industrial-scale problems with millions of variables. The key insight was not brute force but intelligence about failure.

The GRASP system, developed by João Marques-Silva and Karem Sakallah in 1996, introduced conflict-driven clause learning. When a SAT solver reaches a contradiction during search, instead of simply backing up and trying something else, GRASP analyzed the contradiction, identified which earlier decisions had caused it, and learned a new rule that would prevent the same mistake in any future search. This turned the solver's failures into knowledge. The Chaff solver from Princeton in 2001 added watched literals and a decision heuristic called VSIDS that prioritized variables that had appeared in recent conflicts. The combination made SAT solving dramatically faster on the kinds of structured problems that appear in hardware verification and software analysis. MiniSat, released around 2003, compressed these ideas into roughly two thousand lines of C++ and became the reference implementation that a generation of researchers extended and built upon.

But SAT solvers reason only about Boolean variables. They can tell you whether a circuit computes the right value or whether a policy is satisfiable, but they cannot natively reason about integers, or strings, or arrays, or the arithmetic that appears everywhere in real programs and real policies. The field's response to this limitation was Satisfiability Modulo Theories, or SMT: a framework that extends SAT solving with specialized decision procedures for different mathematical domains, letting a SAT core handle the Boolean structure of a problem while handing off subproblems to theory-specific solvers that understand arithmetic, or bit vectors, or arrays. The two components communicate through unit propagation and theory lemmas, cooperating to solve problems that neither could handle alone.

The tool that made SMT practical at industrial scale was Z3, built by Leonardo de Moura and Nikolaj Bjørner at Microsoft Research and first released in 2007. Z3 supports arithmetic, bit-vectors, arrays, strings, and quantified formulas. It won four first places at the SMT-COMP competition in its debut year. It received the ACM SIGPLAN Programming Languages Software Award in 2015. It is today the most widely deployed SMT solver in the world, underlying tools from AWS's Zelkova to Facebook's Infer to an enormous range of academic verification systems. The other major solver ecosystem, CVC5, descends from work at Stanford. An early collaboration between Amazon researchers and the Stanford team working on CVC5's predecessor contributed directly to the solver infrastructure that now processes a billion automated reasoning checks at AWS every day.

Formal Verification Reaches Software

The move from hardware to software was not straightforward. Hardware circuits have finite state spaces and well-defined specifications. Software runs on general-purpose machines, manipulates unbounded data structures, and interacts with environments that cannot be fully anticipated. The state spaces involved are not large but infinite. Exhaustive checking of the kind that worked for circuits was simply not available.

The approach that made software verification tractable was abstract interpretation, developed by Patrick and Radhia Cousot and introduced at the Programming Language Design and Implementation symposium in 1977. The core idea is deliberate, principled approximation. Instead of tracking the exact values that program variables can take during execution, you track an over-approximation: a set of values guaranteed to contain the true set. If you can prove that no execution within the over-approximation violates a property, then no real execution can either. The approximation is sound by construction. The Cousots built a mathematical framework for reasoning about which approximations are valid and how to compose them, and their students and collaborators spent the following decades turning that framework into practical tools.

The most striking application was Astrée, an abstract interpretation-based analyzer developed at ENS Paris in the early 2000s. Airbus tasked the team with analyzing the primary flight control software for the A340 and A380, 132,000 lines of C code responsible for keeping aircraft in the air. Astrée proved the complete absence of runtime errors in that code. No array out of bounds. No integer overflow. No null dereference. Zero false alarms, on a complete analysis, in 80 minutes of computation time. Airbus subsequently used the same approach on the Jules Verne automated transfer vehicle, which docked with the International Space Station in 2008. The software that guided that docking had been proven correct.

Microsoft's contribution to software verification came through a different route. The SLAM project, begun by Thomas Ball and Sriram Rajamani around 2000, used symbolic model checking combined with counterexample-guided abstraction refinement to check whether Windows device drivers respected the rules of the kernel interfaces they called. Drivers were the source of an enormous fraction of Windows crashes, and the volume of third-party driver code made manual review impossible. SLAM automated the checking and became the Static Driver Verifier, shipped with the Windows Driver Development Kit and required as a certification gate for kernel drivers. Bill Gates highlighted the project at WinHEC 2002. The second version of the tool reduced false alarm rates to under five hundredths of a percent on KMDF drivers, meaning that when it flagged a bug, the bug was almost certainly real.

The seL4 microkernel, developed at NICTA and Data61 in Australia and presented at the SOSP conference in 2009, represented something more ambitious than any of these projects. The team, led by Gerwin Klein and Gernot Heiser, produced a formal proof of functional correctness for a complete operating system kernel, 8,700 lines of C verified in the Isabelle proof assistant over roughly twenty person-years. The proof does not merely establish the absence of specific bug classes. It establishes that the kernel correctly implements its specification in every possible execution, under every possible sequence of inputs. This is a categorically stronger claim than anything testing can make, and it required an effort comparable to a significant engineering project in its own right.

Jean-Raymond Abrial's B method produced a different kind of landmark. The Paris Metro Line 14, opened in 1998, was the first fully automated metro line in the world. Its safety-critical control software was developed entirely through formal specification and proof using the B method. After the proofs were complete, zero bugs were discovered in the software. It ran at version 1.0 for nearly a decade without a single modification. In an industry where software systems routinely accumulate patches and hotfixes from the day they ship, this was remarkable enough to be cited in formal methods literature for years afterward.

In cryptography, Project Everest, a collaboration between Microsoft Research and INRIA, produced HACL*, a suite of verified cryptographic implementations covering elliptic curve operations, hash functions, and authenticated encryption. HACL* is deployed in Firefox and the Linux kernel. TLS 1.3, the current standard for encrypting internet traffic, was the first major security protocol whose design process incorporated formal analysis as a first-class requirement rather than an afterthought. The tools and the cultural shift that made that possible were both products of decades of work in the formal methods tradition.

AWS Does A Billion SMT Queries a Day

Byron Cook founded the AWS Automated Reasoning Group around 2014. Cook's background: a decade at Microsoft Research co-developing SLAM and creating the TERMINATOR termination prover. He now holds the title of Vice President and Distinguished Scientist at Amazon while maintaining a professorship at University College London, and since October 2024 has served part-time as Program Manager at DARPA's Information Innovation Office.

AWS leadership now describes this as a "Golden Age" for automated reasoning. The scale is staggering.

Zelkova translates AWS access control policies (IAM, S3) into SMT formulas and runs a portfolio of Z3 and CVC5 solvers in parallel to verify whether one policy is strictly less permissive than another. This is a PSPACE-complete problem. Zelkova solves it in milliseconds to seconds and processes over one billion SMT queries daily. The CAV 2022 invited paper was literally titled "A Billion SMT Queries a Day." Zelkova powers IAM Access Analyzer and S3 Block Public Access.

Tiros encodes the semantics of the entire AWS network stack into logic: every VPC, subnet, security group, routing table, and network ACL. Using Soufflé (Datalog), MonoSAT (SMT), and Vampire (theorem prover), it performs static network reachability analysis at scale. Tiros powers VPC Reachability Analyzer and Amazon Inspector's network security features.

s2n-TLS, AWS's open-source TLS implementation, has proofs of HMAC, DRBG, and the TLS handshake state machine established through Cryptol, SAW, and Coq, with SideTrail verifying timing side-channel resistance. Proofs are automatically re-verified on every code commit in CI/CD. The CAV 2018 paper "Continuous Formal Verification of Amazon s2n" described how verification becomes sustainable in practice: integrate it into the development pipeline and treat proof failures exactly like test failures.

Cedar, the policy language open-sourced in 2023, is AWS's most complete example of verification-guided development. The authorization engine is formally modeled in Lean 4 with proved authorization theorems, validation soundness, and symbolic compilation correctness. The production implementation is in safe Rust. Differential random testing runs millions of inputs through both the Lean model and the Rust code continuously. Cedar powers Amazon Verified Permissions and AWS Verified Access.

The Cloud Infra AR crown jewel: the formally verified IAM authorization engine, described in a 35+ author paper at ICSE 2025. Over four years, the team rebuilt the engine that processes one billion API calls per second in Dafny, proving functional equivalence to the predecessor system. The result was a 3x performance improvement, achieved by building the most safety-critical system at AWS on formal foundations. Verification did not slow things down. It sped them up.

Automated Reasoning checks (ARc) in Amazon Bedrock Guardrails, announced at re:Invent 2024, brings this capability to AI: domain knowledge is expressed as formal logic and AI outputs are checked against it with a solver. AWS claims up to 99% verification accuracy in detecting AI hallucinations. For domains with fully specified rules, you can reach 100%.

Why Is AWS So Far Ahead?

The honest answer is: deliberate, decade-long investment driven by existential motivation.

When you are securing the cloud infrastructure that runs a significant fraction of the internet, informal methods carry catastrophic risk. A misconfigured IAM policy does not just affect one customer. A network reachability bug does not just affect one service. The scale of AWS's infrastructure means that formal correctness is not a nice-to-have. It is the only viable architecture for security at cloud scale.

AWS made the unusual decision to hire world-class academic researchers (Cook, Leino, Torlak, Rungta, Hicks) and give them a mandate to build production systems, not just publish papers. The result is a decade of compounding investment: tools that have been running at billion-query scale for years, with engineering culture and institutional knowledge that cannot be replicated quickly.

What Are the Other Cloud Providers Doing?

Microsoft Azure has deep roots in formal methods through Microsoft Research, which invented Z3 (the SMT solver at the heart of most automated reasoning systems globally) and Dafny (the verified programming language AWS used to rebuild its authorization engine). Microsoft uses TLA+ extensively for distributed systems design internally, has published formal specifications of Azure Cosmos DB consistency levels, and Microsoft Research produced the Infer static analyzer used by Meta. These are genuine technical contributions. What Microsoft has not done is ship a customer-facing cloud product equivalent to Zelkova, Tiros, or ARc. The formal methods expertise lives in Microsoft Research and internal engineering teams. It has not been productized in the same way. Azure's AI safety story is primarily LLM-based content filtering and observability tooling, not formal verification.

Google Cloud has significant formal methods research through Google DeepMind (AlphaProof, AlphaGeometry) and has used model checking internally for distributed system design. Google researchers have contributed to cryptographic verification and protocol analysis. Again: none of this has been productized as a cloud security service. Google's AI safety work with Gemini is probabilistic guardrailing, not formal verification.

The gap is not technical capability. Microsoft literally invented the key tools. The gap is productization, deployment scale, and the decade of operational learning that comes from running formal verification at a billion queries a day. AWS doesn't just have the tools. They have the accumulated operational knowledge of what breaks, how to tune solvers for production workloads, and how to make formal verification feel like a feature to cloud customers rather than an academic exercise.

AWS's Rod Chapman put it directly: "A key point is that AR builds trust with customers by allowing universal and unconditional statements about system behavior. Not 'we tested this and it appears to work.' We proved it cannot fail in this way."

LLMs and Formal Proof: The Convergence Is Happening Fast

For most of its history, automated reasoning has had a labor problem. The tools were sound. The theory was solid. But using them required the kind of specialized training that took years to acquire and produced, in practice, a global community of perhaps a few thousand people who could write formal proofs with any fluency. The seL4 verified microkernel took twenty person-years. CompCert took longer. Every landmark achievement in the field came with a footnote about the extraordinary human cost of producing it. This was not a criticism of the research. It was simply the reality of what mathematical proof demands from the people who write it.

That bottleneck is now breaking open, and the force breaking it is the same one reorganizing nearly every other knowledge-intensive field: large language models that have, somewhat unexpectedly, turned out to be remarkably good at mathematics.

The combination works because the two technologies fail in opposite directions. A language model generates mathematical intuition fluidly — it can propose a proof strategy, identify a useful lemma, decompose a hard problem into tractable subproblems, and do all of this in seconds across a domain that would take a human expert hours to navigate. What it cannot do is guarantee correctness. It hallucinates steps. It elides details. It produces arguments that look right and are subtly wrong in ways that matter enormously when the thing you are verifying controls an aircraft or a financial system. A formal proof assistant, by contrast, accepts nothing on faith. Every step must be explicitly justified. Every inference must follow from prior results by rules the system can mechanically check. It is incapable of creative leaps and incapable of mistakes. The pairing of these two properties, one system that can guess brilliantly and one that can verify absolutely, is turning out to be more than the sum of its parts.

The benchmark results have arrived with a speed that surprised even researchers in the field. Google DeepMind's AlphaProof, published in Nature in November 2025, combines a fine-tuned Gemini model with AlphaZero-style reinforcement learning, training not on games but on millions of theorems in Lean. At the 2024 International Mathematical Olympiad, the system solved four of six problems. By 2025, an advanced version of Gemini operating with what DeepMind calls Deep Think achieved gold-medal standard on the same competition, solving five of six problems within the four-and-a-half-hour time limit and working entirely in natural language. The IMO is not a test of computation. It is a test of mathematical creativity, the ability to see a structure that is not obvious and construct an argument that no one has written before. The fact that a machine can now do this at gold-medal level is a genuine discontinuity in the history of the field.

Apple's Hilbert framework reached 99.2% on miniF2F, which has served as the standard benchmark for formal mathematical reasoning since its introduction. That number is close enough to saturation that the community has largely moved on to harder problems. DeepSeek-Prover-V2, a 671 billion parameter model released as open source, reached 88.9% on the same benchmark. Harmonic AI, a startup that raised a hundred million dollars in 2025, is building what it describes as hallucination-free AI, with Lean 4 as the formal backbone that makes that claim mean something. OpenAI's o3-mini, integrated with the Z3 SMT solver, achieved complete coverage on the Code2Inv loop invariant benchmark, a problem set that had resisted prior systems for years. Microsoft's SAFE framework, using the Verus verifier and a self-evolving LLM approach, produced over nine thousand verified Rust functions with minimal human intervention.

What is happening here is structural, not merely impressive. For decades the bottleneck in formal verification was the human being at the keyboard, the expert who had to translate intuition into proof steps and then check every one of them. Language models are replacing that bottleneck with something that works at a different speed. The analogy that comes to mind is what calculators did for arithmetic: they did not make arithmetic less important, or make the underlying mathematics easier, but they removed the drudgery that had previously consumed most of the effort, freeing the mathematician to work at a higher level of abstraction. Lean 4 is becoming, in this sense, the programming language of rigorous AI, the substrate on which a new generation of tools is learning to think carefully.

ZKP's Succinct Verification For AI Guardrails: Our Superpower That Changes Everything

This is where ICME Labs is pioneering.

Automated reasoning at AWS produces proofs. Large, computationally expensive proofs. Verifying one of those proofs requires re-running the solver. The policies themselves may be proprietary. And in a world where AI agents transact autonomously at machine speed, the chain of verification breaks: you cannot call back to a central solver on every transaction, you cannot expose your policy rules to counterparties, and you cannot wait for a human to review a dashboard alert.

This is the problem that zero-knowledge proofs were built to solve. And it's the problem that ICME has cracked open.

Succinct verification is the superpower of ZKP systems. A zero-knowledge proof can compress an arbitrarily complex computation into a tiny cryptographic artifact that anyone can verify in under a second, on any hardware, without re-executing the original computation and without seeing any of the private inputs.

This is not a marginal improvement in speed. It is a qualitative shift. A proof that took minutes to generate can be verified in milliseconds. A proof of a policy that must stay confidential can be verified without revealing the policy. A proof generated by infrastructure you do not control can be verified without trusting that infrastructure.

ICME's approach wraps the Automated Reasoning process in a zero-knowledge proof using Jolt Atlas, our zkVM adapted from a16z Crypto's JOLT proving system, achieving 3-7x speed improvements over competing zkML implementations. The result is a cryptographic receipt that proves:

- Which specific guardrail model executed

- That execution was faithful to the model's exact weights and architecture

- That the formal policy check ran correctly to completion

- The result of that check (SAT or UNSAT)

- All of this without revealing the underlying policy

Any machine can verify this receipt in milliseconds. An AI agent can verify another AI agent's compliance cryptographically, with no trust in the infrastructure, no exposure of proprietary rules, and no human in the loop.

This is what we call ZK(ARc): zero-knowledge proofs over Automated Reasoning checks. It is not a wrapper around someone else's product. It is a new primitive that did not exist before. The combination of mathematical certainty from formal verification with cryptographic portability from zero-knowledge proofs enables a class of applications that neither technology could provide alone.

Consider what this unlocks:

Machine-speed trustless commerce. When AI agents transact with each other across organizational boundaries, neither party trusts the other's infrastructure. ZK(ARc) lets an agent present a cryptographic proof that its guardrails ran and its rules were satisfied, verifiable by the counterparty's agent in milliseconds. No trust required. No central authority. Just math.

Private policy enforcement. Your guardrail rules may encode proprietary risk models, regulatory thresholds, or competitive intelligence. Today, sharing a proof of compliance means sharing the policy. With ZK(ARc), you prove compliance without revealing the policy. The proof is public. The rules stay private.

Autonomous incident response. When a proof shows a violation, monitoring agents do not need to re-execute traces or wait for human review. They check the succinct proof and respond automatically. The proof itself is the audit trail. No interpretation required.

Composable security. Multiple agents in a workflow can each generate ZK(ARc) proofs for their portion of the execution. A downstream agent can verify the entire chain of custody cryptographically. The security of a multi-agent workflow becomes composable and verifiable end-to-end.

The division of labor is clean. Humans design the rules, train the models, and set the security requirements — the judgment layer that only humans can provide. Automated reasoning enforces those rules through formal logic, deterministically and mathematically, with no drift, no interpretation, and no guesswork. ZK(ARc), what ICME builds, proves that the correct models and rules actually executed, producing cryptographic receipts that any machine can verify in under a second without trusting the party that generated them. And monitoring agents sit at the end of the chain, checking those proofs and triggering responses autonomously at machine speed. No humans watching dashboards. No alert queues. Just math verifying math, all the way down.

Math verifies math, autonomously, without trust, without latency, without a human dashboard.

History Is Clear. Verifiable AI Will Follow The Trend.

Every time computing has entered a new high-stakes domain, informal methods have proven insufficient and formal methods have followed.

Hardware verification in the 1990s after the Pentium FDIV bug ($475 million). Software driver verification in the 2000s after Windows crashes cost Microsoft credibility at scale. Cryptographic protocol verification in the 2010s after TLS vulnerabilities proved that "looks secure" is not the same as "is secure." Cloud security policy verification in the 2020s as AWS scaled to billions of customers and informal policy analysis became untenable.

Each transition followed the same arc: a catastrophic failure proved the cost of informality, formal methods experts moved from academia to production systems, tooling matured, and verification became routine.

Agentic commerce is at the very beginning of that curve right now. AI agents are moving money, executing contracts, and making security decisions at machine speed. The guardrails protecting them are probabilistic filters designed for human-supervised systems with time to investigate anomalies.

The formal verification infrastructure already exists. AWS runs it at a billion queries a day. The research has been published. The tools are production-proven.

The discovery that ICME has made is that zero-knowledge proofs can make those proofs portable, private, and verifiable in under a second by any machine in any environment without trusting any central authority. This is the infrastructure that will secure agentic commerce at billion transaction scale.

ZK(ARc) is the combination that makes machine-speed trustless agentic commerce possible.

At ICME Labs we are making new discoveries at the intersection of automated reasoning and zero-knowledge cryptography. Check out Jolt Atlas and the ICME PreFlight API to start building with succinctly verifiable AI guardrails today. Star and follow if you want to help make agentic commerce mathematically safe.

Technical Founder of ICME Labs. Building the cryptographic layer that makes formal verification portable, private, and verifiable in under a second. Writing at the intersection of AI and cryptography.